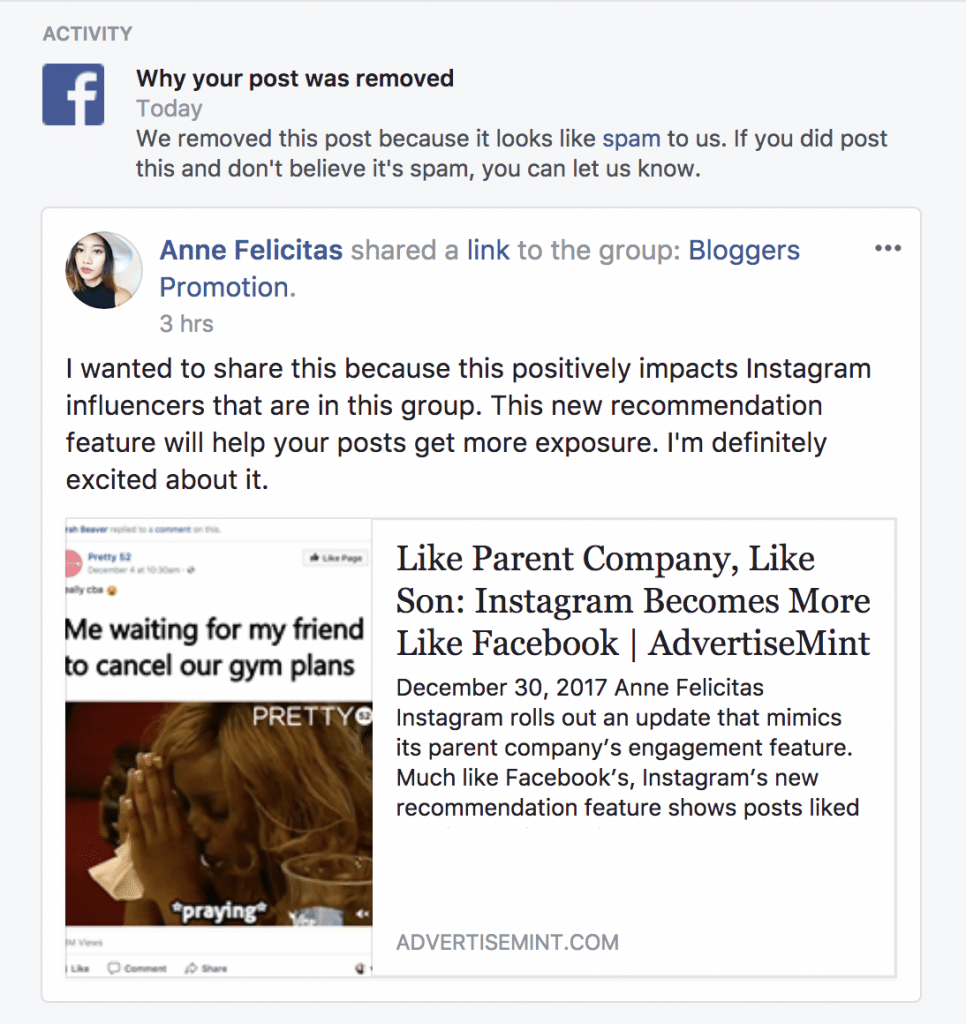

Immediately after posting a link to a group, I received a message from Facebook that made my heart plummet, the way a guilty person’s does during a reprimand. “We removed this post because it looks like spam to us. If you did post this and don’t believe it’s spam, you can let us know,” the message said. When I posted the same link to three other groups, I instantly received the same message. I was perplexed. I had never posted spam on Facebook before. I was sure that the link I had posted was legitimate. It pointed to the website of a Facebook ads company that I had used in the past and had been happy with.

I decided to contact Facebook support to see if they could help me. I explained that I had not posted spam and that I believed the link I had posted was legitimate. The support representative I spoke to said that they would investigate the matter.

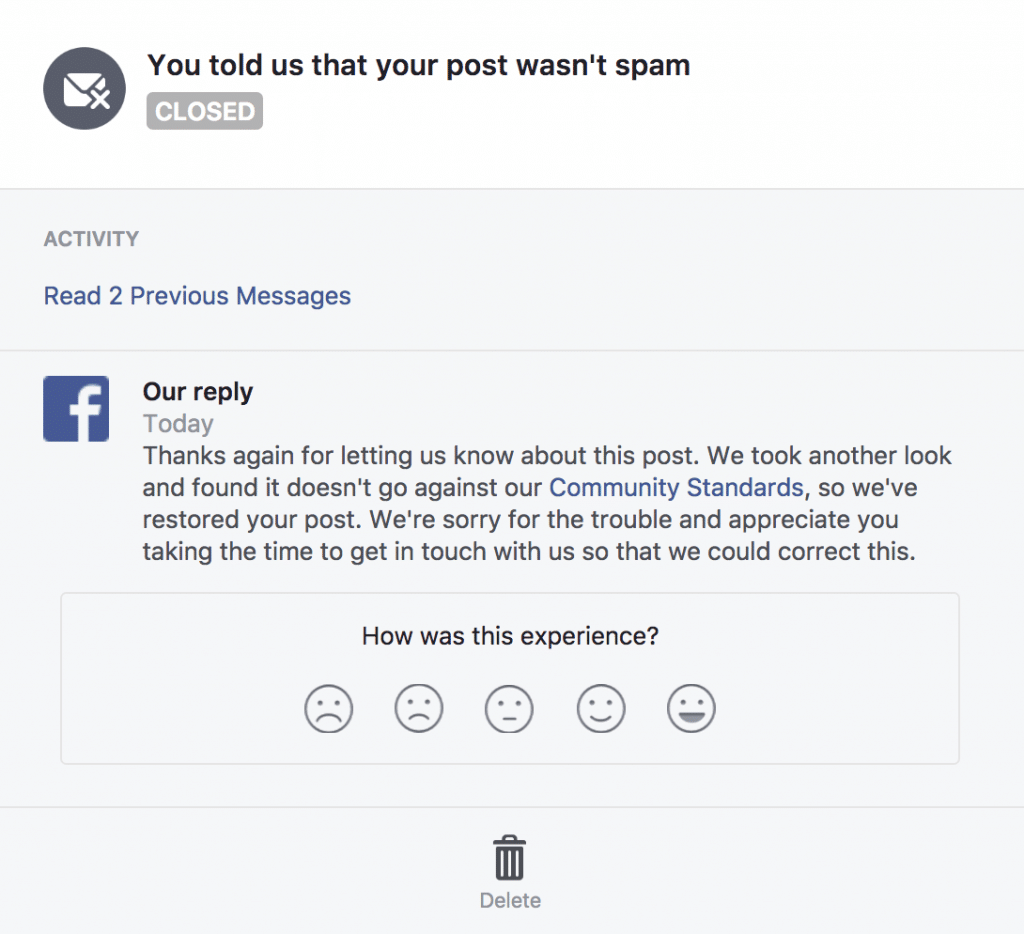

A few days later, I received an email from Facebook support saying that they had determined that the link I had posted was not spam. They apologized for the inconvenience and said that my posts had been restored.

I was relieved to hear that Facebook had corrected the mistake. I was also glad that I had taken the time to contact support. I learned that it is important to be proactive when you believe that your account has been wrongly flagged.

I also learned that it is important to be careful about the links you post on Facebook. Even if you believe that a link is legitimate, it is possible that it could be flagged as spam by Facebook’s automated systems. If you are unsure about a link, it is always best to err on the side of caution and not post it.

Here are some tips for avoiding your posts being flagged as spam on Facebook:

- Only post links to websites that you trust.

- Make sure the links you post are relevant to the groups or pages you are posting in.

- Avoid posting links to multiple groups or pages in a short period of time.

- If you are unsure about a link, don’t post it.

By following these tips, you can help to ensure that your posts are not flagged as spam on Facebook.

In the numerous times I had posted the same link to several different Facebook groups in one session, Facebook had never removed my posts. Nonetheless, eager to correct my transgression, I replied by clicking the it’s-not-spam button attached to the message. I then received an automated response stating that Facebook would review my post again.

Soon after its automated response, Facebook sent me another message: it had restored my posts because they weren’t spam after all. This news, although positive, left me perplexed. I wondered why Facebook thought my posts were spam in the first place.

According to the Facebook Help Center, spam is “contacting people with unwanted content or requests. This includes sending bulk messages, excessively posting links or images to people’s timelines, and sending friend requests to people you don’t know personally.” My transgression may have fallen under excessively posting links to people’s timelines, although in my case the links went to groups. But how did Facebook know I violated its policy?

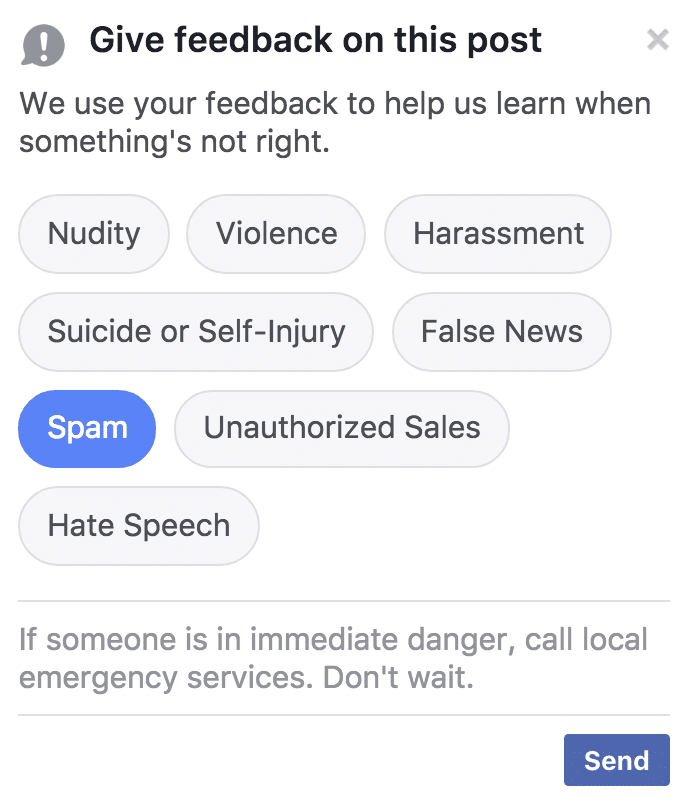

Facebook fights spam in two ways. One, it relies on the watchful eyes of users. When you spam timelines and news feeds, someone can report you to Facebook. Once Facebook employees receive that person’s report, they will investigate the case and contact you, keeping the reporter’s name and personal information confidential. Two, according to a Facebook representative, Facebook also uses automated systems to fight spam on its platform.

“We also implement automated systems to stop the spread of certain types of content that very clearly violate our Community Standards. For example, we use automation to recognize and stop spam attacks.”

It would be too much of a coincidence for different people in four different groups to mark my posts as spam the second I published them, and the messages I received from Facebook were instantaneous, as though they were automated. It’s much more likely that Facebook’s automated system saw that I was posting the same link to multiple groups in one sitting, perceived my action as spam, removed my posts, and sent me a warning. But why did Facebook decide to restore my posts?

According to the same Facebook representative, Facebook also employs people who decide whether an action actually violated Facebook’s Community Standards.

“Of course, a lot of the work we do is very contextual, such as determining whether a particular comment is hateful or bullying. That’s why we have real people looking at those reports and making the decisions.”

It’s likely that after I responded to Facebook’s warning message, an employee looked into my case and decided my posts weren’t spam after all.
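To make that two-step process concrete, here is a minimal sketch in Python of how an automated check followed by a human review might work. This is only my guess at the general shape, not Facebook’s actual system: the class name, the function name, the ten-minute window, and the three-group limit are all hypothetical, since Facebook doesn’t publish its rules.

```python
from collections import defaultdict, deque

# Hypothetical thresholds; Facebook's real rules are not public.
WINDOW_SECONDS = 600  # look back ten minutes
MAX_GROUPS = 3        # the same link may appear in this many groups per window


class LinkSpamHeuristic:
    """Toy detector: flags a user who posts one link to many groups quickly."""

    def __init__(self, window=WINDOW_SECONDS, max_groups=MAX_GROUPS):
        self.window = window
        self.max_groups = max_groups
        # (user, url) -> deque of (timestamp, group) for recent posts
        self.recent = defaultdict(deque)

    def looks_like_spam(self, user, url, group, timestamp):
        posts = self.recent[(user, url)]
        # Forget posts that have aged out of the time window.
        while posts and timestamp - posts[0][0] > self.window:
            posts.popleft()
        posts.append((timestamp, group))
        # Count how many different groups received this link recently.
        distinct_groups = {g for _, g in posts}
        return len(distinct_groups) > self.max_groups


def resolve_appeal(reviewer_says_spam):
    """Human review step: the reviewer's decision overrides the automated flag."""
    return "removed" if reviewer_says_spam else "restored"
```

In this sketch, posting one link to a fourth group within ten minutes trips the automated check, and a reviewer who disagrees with the flag restores the post.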

In the past, relying on technology alone hasn’t been the best way for Facebook to combat abuse on its platform. That’s why Facebook has the Community Operations team, which resolves issues ranging from spam to cyberbullying to hate speech to terrorist threats, and why Facebook promised to hire 3,000 more reviewers last year when several violent videos emerged on its platform. But with a platform serving billions of users, a platform buzzing with activity 24 hours each day, Facebook needs technology to help employees combat as much abuse as they can.