Gone are the days of mysterious account deletions. In a post to its press page, Instagram announced that it will warn users when their accounts are at risk of being disabled.

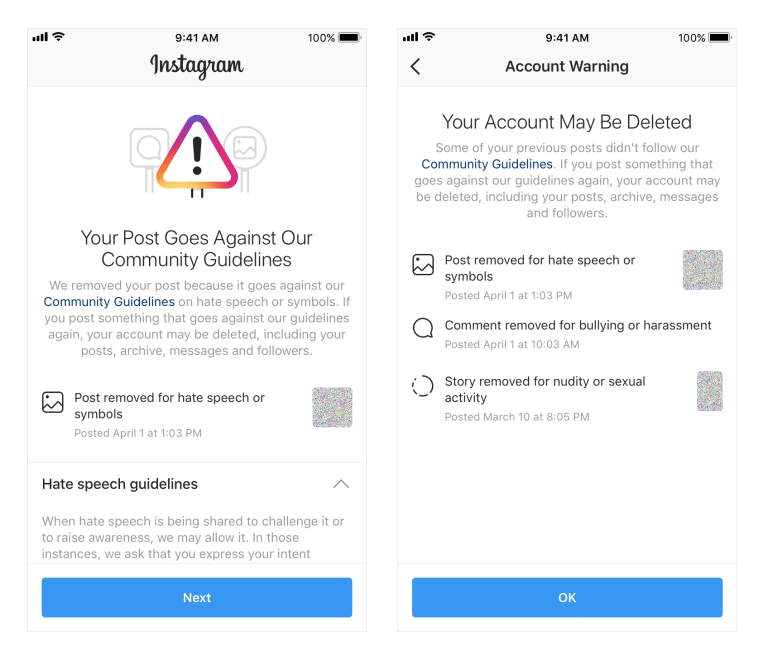

Under the new notification process, Instagram will warn users that their accounts may be deleted for violating its Community Guidelines.

“Some of your previous posts didn’t follow our Community Guidelines. If you post something that goes against our guidelines again, your account may be deleted, including your posts, archive, messages and followers,” says Instagram’s message.

Underneath the message, Instagram will include a timeline of the account’s previous violations, providing context for the warning. Users will also be able to appeal deleted content directly from the notification, a far cry from the previous process, which required them to visit Instagram’s Help Center to file an appeal. If Instagram decides a post was flagged in error, the post will be restored and the violation removed from the account’s record.

For now, appeals through the notification are available only for violations related to nudity, pornography, bullying, harassment, hate speech, drugs, and terrorism. Instagram will expand appeals to more categories in the future.

Instagram Updates Policy

Alongside the new warning process comes a new policy, which Instagram is currently rolling out. According to the updated policy, Instagram will remove accounts with a certain percentage of violating content within a given time frame. This allows the social media company to catch particularly active violators. For example, if an account with no previous history of deleted content suddenly begins posting nudity within a 48-hour period, Instagram will remove that account.

“Similarly to how policies are enforced on Facebook, this change will allow us to enforce our policies more consistently and hold people accountable for what they post on Instagram,” says Instagram’s announcement.

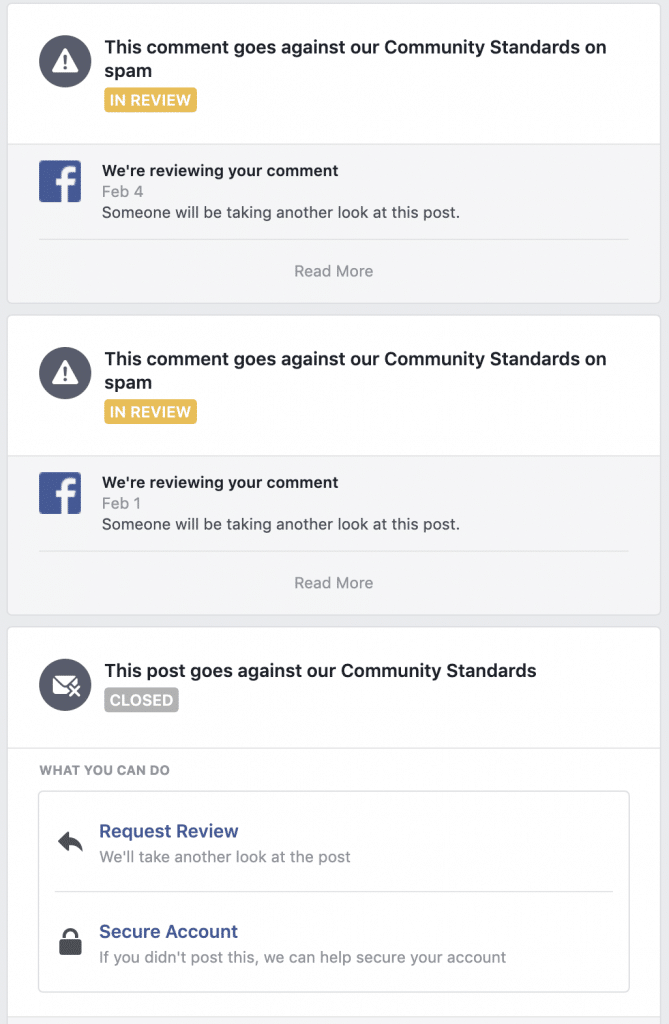

Instagram’s changes mimic Facebook’s current system. When users violate the social network’s Community Standards, whether by spamming a group or posting inappropriate images, Facebook sends notifications that contain a warning and an option to appeal the decision. Facebook keeps a record of violations and correspondence, which can be found under the “Support Inbox” section of users’ Facebook Settings.

Currently, Instagram does not have a section in Settings that allows users to review all of their violations. However, Instagram’s announcement did say that it will bring Help Center directly to Instagram, where users can appeal disabled accounts.

Steps to Greater Transparency

For years, Instagram has irked many users with its banning method. Rather than warning them that their accounts were at risk, the social media company deleted violating profiles without prior notice, leaving users perplexed and horrified when they tried to access their accounts and found them deactivated. By providing a warning before deletion, Instagram gives users a chance to salvage their accounts.

Instagram’s timeline of violations is certainly a step toward greater transparency. Previously, Instagram deactivated accounts without explanation. With the timeline, Instagram can help users understand the reason behind deactivation.

Written by Anne Felicitas, writer & editor